AI is being introduced into regulated environments at an accelerating pace. From quality management to risk monitoring, organizations are under pressure to adopt more advanced analytics and automation. But a critical issue is starting to surface, one that has little to do with performance and everything to do with accountability. When a system makes a decision, can that decision be explained, defended, and audited?

In regulated industries, that question is not theoretical. It is operational.

The Problem Isn’t Intelligence, It’s Accountability

There is a quiet assumption driving most AI adoption strategies. If a model is accurate, it is valuable. That logic works in environments where outcomes matter more than process. It breaks down in industries where decisions must be justified, documented, and traceable.

A quality deviation, a risk score, or a compliance alert is not just an output. It is something that may need to be reviewed by auditors, regulators, or internal governance teams. If the reasoning behind that output cannot be clearly understood, the organization inherits risk rather than reducing it.

This is where black box AI becomes problematic. Not because it lacks capability, but because it lacks transparency.

Where Black Box AI Collides With Regulation

Black box models operate in a way that obscures how decisions are made. Even when they perform well, they introduce a disconnect between outcome and explanation. In a regulated context, that disconnect creates friction.

When an auditor asks why a deviation was flagged or why a decision was made, “the system determined it” is not a sufficient answer. The organization must show how data, process context, and rules contributed to that outcome. Without that visibility, accountability becomes unclear, and governance starts to weaken.

More subtly, black box systems make change difficult. Regulations evolve. Processes change. Risk profiles shift. If the logic behind decisions cannot be understood, it becomes extremely difficult to assess the impact of those changes across the organization.

What initially looks like a powerful tool can quickly become something that cannot be safely adapted.

Explainability Changes the Role of AI Entirely

Explainable AI is often discussed as a technical improvement, as if it simply makes models easier to interpret. In regulated environments, it does something more fundamental. It allows AI to participate in governance.

When decisions can be traced back to inputs, process steps, and contextual relationships, they can be reviewed, challenged, and improved.

AI outputs stop being isolated signals and become part of a broader decision-making structure.

This shift matters because regulated organizations do not operate on isolated insights.

They operate on interconnected systems where processes, risks, controls, and compliance obligations are tightly linked.

Explainability makes it possible for AI to exist within that system rather than outside of it.

Why Most AI Initiatives Stall in Regulated Environments

Many organizations approach AI as a layer they can add on top of existing systems. Models are introduced, insights are generated, and dashboards are built. But something doesn’t quite connect.

The issue is not the AI itself. It is the absence of an underlying structure that ties those insights back to how the organization actually operates.

Without a clear connection to processes, roles, risks, and controls, AI outputs remain abstract. They can inform, but they cannot drive governed action. This is why many initiatives produce interesting insights but fail to translate into operational improvement or compliance value.

The gap is architectural.

Where Interfacing Moves Explainability From Theory to Execution

Most platforms talk about explainability at the model level. The real challenge is operational explainability: being able to trace a decision through the business itself.

This is where Interfacing becomes materially different. Because the platform structures the organization as a connected operating model, explainability is not something added to AI. It is a property of how the system works.

Consider a quality deviation.

In a typical environment, an AI model might flag an anomaly. What happens next is manual investigation. Teams try to understand what process failed, which control was ineffective, and who is responsible. The insight exists, but the explanation has to be reconstructed.

In Interfacing, that same event is already anchored in context.

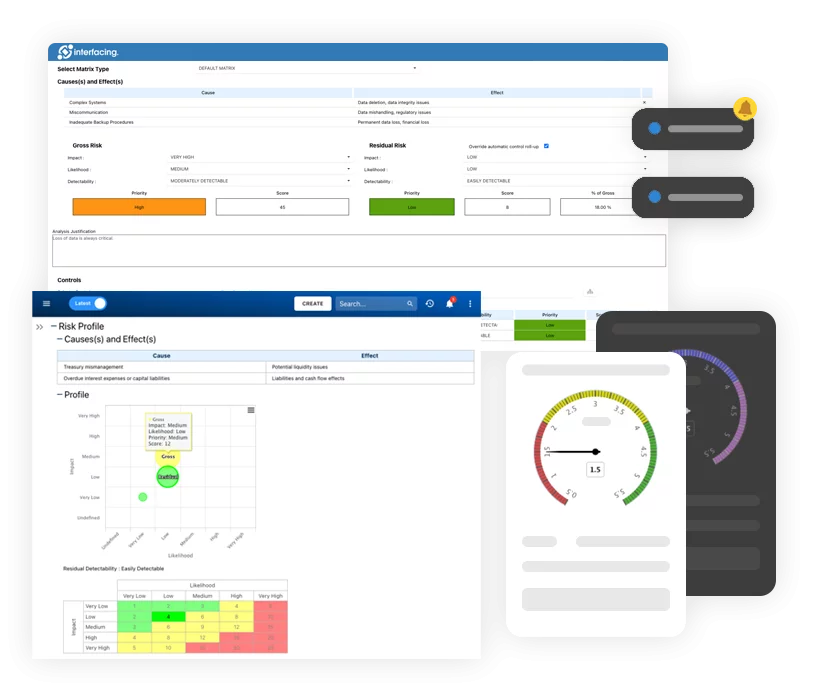

The deviation is linked directly to:

- the process where it occurred

- the associated risks and controls

- the governing procedures and documents

- the responsible roles and stakeholders

Because these relationships are modeled in the system, the “why” behind the issue is not inferred after the fact. It is visible immediately.

This is what makes explainability operational.
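
As a rough illustration of what "anchored in context" can mean, the linkage above can be sketched as a small data model. This is a minimal sketch and not Interfacing's actual data model or API: the class names, field names, and sample values (`Process`, `Deviation`, `explain`, "SOP-014", and so on) are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Process:
    """A process with its linked governance objects (hypothetical structure)."""
    name: str
    risks: tuple[str, ...] = ()
    controls: tuple[str, ...] = ()
    procedures: tuple[str, ...] = ()
    owners: tuple[str, ...] = ()


@dataclass(frozen=True)
class Deviation:
    """A flagged event that carries a direct link to the process it occurred in."""
    deviation_id: str
    description: str
    process: Process

    def explain(self) -> dict[str, object]:
        # The context is read from existing links, not reconstructed afterwards.
        return {
            "deviation": self.deviation_id,
            "process": self.process.name,
            "risks": list(self.process.risks),
            "controls": list(self.process.controls),
            "procedures": list(self.process.procedures),
            "owners": list(self.process.owners),
        }


# Hypothetical example data, invented for illustration only.
filling = Process(
    name="Sterile filling",
    risks=("Contamination",),
    controls=("Hourly particle count",),
    procedures=("SOP-014",),
    owners=("QA lead",),
)
deviation = Deviation("DEV-001", "Particle count above limit", filling)
context = deviation.explain()
```

The point of the sketch is the design choice: because the deviation holds a reference to its process, the explanation is a lookup rather than an investigation.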

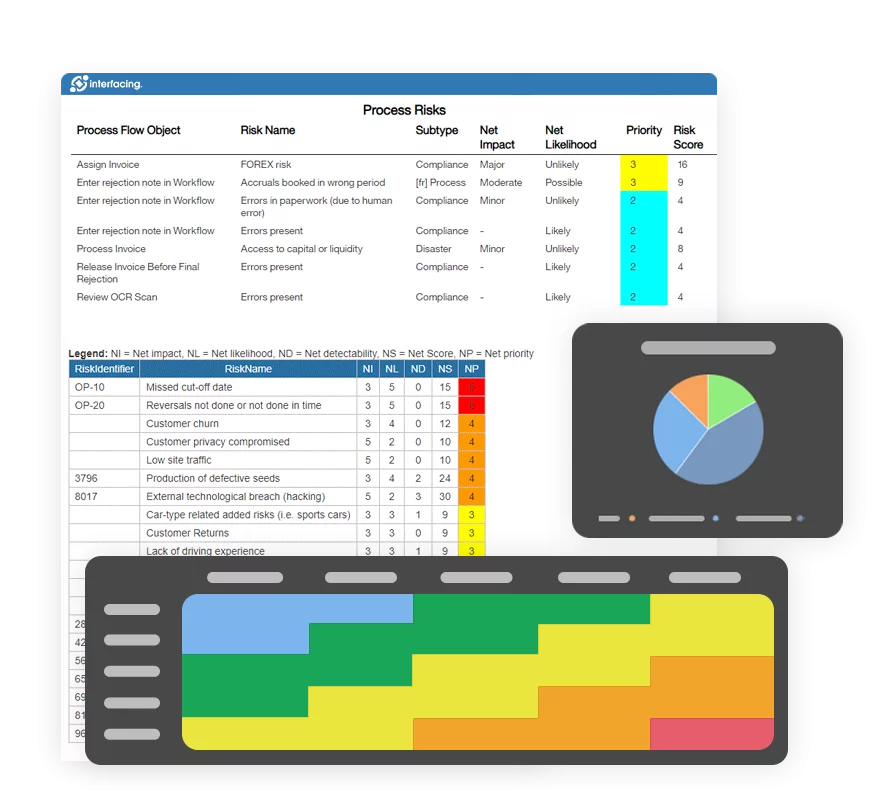

The platform’s ability to connect processes, risks, controls, data, and regulatory requirements into a single repository allows organizations to perform real impact analysis rather than guesswork.

Now extend that thinking to regulatory change.

When a regulation is updated, most organizations perform impact assessments manually. They search documents, identify affected processes, and try to determine downstream effects.

With Interfacing, that analysis becomes structured.

Because regulations, processes, controls, and assets are already linked, the system can:

- identify impacted processes

- highlight affected controls and risks

- trigger workflow-based reviews and approvals

This is not just automation. It is explainable change management. Every decision, every update, every approval is traceable and recorded.
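
One way to picture structured impact analysis is as a walk over linked records. The sketch below is an assumption-laden illustration, not the platform's implementation: the `LINKS` dictionary, the `impacted_by` function, and every identifier in the sample data are hypothetical. It uses a breadth-first search and keeps the path to each affected item, so the result explains why something is impacted, not only that it is.

```python
from collections import deque

# Hypothetical links: each key points to the items that depend on it.
LINKS = {
    "REG:Example-Rule": ["PROC:Sterile filling", "PROC:Environmental monitoring"],
    "PROC:Sterile filling": ["CTRL:Hourly particle count", "RISK:Contamination"],
    "PROC:Environmental monitoring": ["CTRL:Hourly particle count"],
    "CTRL:Hourly particle count": [],
    "RISK:Contamination": [],
}


def impacted_by(start: str, links: dict[str, list[str]]) -> dict[str, list[str]]:
    """Breadth-first walk that records one path from `start` to each affected item."""
    paths = {start: [start]}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        for nxt in links.get(node, []):
            if nxt not in paths:  # visit each item once, keeping the first path found
                paths[nxt] = paths[node] + [nxt]
                queue.append(nxt)
    del paths[start]  # the changed regulation itself is not an "impact"
    return paths


impact = impacted_by("REG:Example-Rule", LINKS)
for item, path in impact.items():
    print(item, "via", " -> ".join(path))
```

Each entry in `impact` could then seed a review task, which is where the workflow-based reviews and approvals described above would attach.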

The same principle applies to audit readiness.

Instead of preparing evidence reactively, organizations operating within Interfacing maintain:

- full audit trails of changes

- version-controlled documentation

- traceable workflows and approvals

These capabilities are built into the platform’s governance layer, including audit logs, version control, and validated system controls designed for regulatory environments.

This means that when an auditor asks how a decision was made, the answer is not reconstructed. It is already documented within the system.
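
To make "already documented" concrete, here is a minimal sketch of an append-only audit trail with version numbering. It is an illustration under stated assumptions, not a description of how the platform stores its audit logs: `AuditEntry`, `AuditTrail`, and the sample document IDs and user names are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class AuditEntry:
    """One immutable record of a change and its approval."""
    document_id: str
    version: int
    changed_by: str
    approved_by: str
    reason: str
    timestamp: datetime


class AuditTrail:
    """Append-only log: entries are added but never edited or removed."""

    def __init__(self) -> None:
        self._entries: list[AuditEntry] = []

    def record(self, document_id: str, changed_by: str,
               approved_by: str, reason: str) -> AuditEntry:
        # The next version is one more than the number of prior entries
        # for the same document.
        version = 1 + sum(e.document_id == document_id for e in self._entries)
        entry = AuditEntry(document_id, version, changed_by, approved_by,
                           reason, datetime.now(timezone.utc))
        self._entries.append(entry)
        return entry

    def history(self, document_id: str) -> list[AuditEntry]:
        """Return every recorded version of a document, oldest first."""
        return [e for e in self._entries if e.document_id == document_id]


# Hypothetical usage with invented document IDs and users.
trail = AuditTrail()
trail.record("SOP-014", "author_a", "approver_b", "Initial release")
trail.record("SOP-014", "author_a", "approver_c", "Updated sampling interval")
versions = [e.version for e in trail.history("SOP-014")]
```

When an auditor asks how a document reached its current state, `history` answers from records written at the time of each change.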

From Insight to Action: The Missing Link

One of the most common gaps in AI adoption is the distance between insight and execution. A model may identify a risk or predict an issue, but the organization still needs to decide what to do next, who is responsible, and how that action is tracked.

In a governance-driven environment, that transition is built in.

When insights are connected to workflows, corrective actions can be initiated, tracked, and validated within the same system. Decisions are not only made, they are recorded, traceable, and auditable. Over time, this creates a feedback loop where both processes and decision-making improve.

This is where explainability proves its real value. It is not just about understanding decisions after the fact. It is about enabling decisions to be managed as part of an ongoing operational system.
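
The step from insight to tracked action can be sketched as a small state machine. This is a hypothetical illustration, not the product's workflow engine: the status names, the `ALLOWED` transition table, and the `CorrectiveAction` class are assumptions chosen for the example.

```python
from dataclasses import dataclass, field

# Hypothetical workflow: which status changes are permitted from each status.
ALLOWED = {
    "open": {"in_progress"},
    "in_progress": {"completed"},
    "completed": {"verified", "in_progress"},  # verification can send work back
    "verified": set(),
}


@dataclass
class CorrectiveAction:
    """An action raised from an insight, with an owner and a recorded history."""
    insight: str
    owner: str
    status: str = "open"
    history: list[tuple[str, str, str]] = field(default_factory=list)

    def move_to(self, new_status: str, actor: str) -> None:
        if new_status not in ALLOWED[self.status]:
            raise ValueError(f"cannot move from {self.status} to {new_status}")
        # Record who made the transition before applying it.
        self.history.append((self.status, new_status, actor))
        self.status = new_status


# Hypothetical usage with invented names.
action = CorrectiveAction("Particle count trending upward", owner="QA lead")
action.move_to("in_progress", actor="QA lead")
action.move_to("completed", actor="Line supervisor")
action.move_to("verified", actor="Quality manager")
```

Because every transition is checked and logged, the action cannot skip verification, and the record shows who moved it at each step.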

Regulation Is Moving Toward Transparency, Not Away From It

Regulatory expectations are evolving in a way that reinforces this direction. Across industries, there is increasing emphasis on data integrity, auditability, and system validation. Organizations are expected to demonstrate not only that they are compliant, but how they maintain compliance on an ongoing basis.

This trend does not favor opaque systems.

Even if regulations do not explicitly mandate explainable AI, they require the outcomes that explainability makes possible. Traceable decisions, clear audit trails, and accountable processes are not optional. They are foundational.

Rethinking the Question

The conversation around AI often focuses on capability. How advanced is the model? How accurate are the predictions?

In regulated industries, those are secondary questions.

The primary question is whether decisions can be governed.

Black box AI struggles in that environment because it separates intelligence from accountability. Explainable, process-integrated AI brings them back together.

Conclusion

AI is not inherently incompatible with regulated industries. But the way it is implemented determines whether it becomes an asset or a liability.

When AI operates without context, it introduces uncertainty. When it is embedded within a structured, governed system, it enhances visibility, improves decision-making, and strengthens compliance.

Explainability is not a feature that organizations can choose to add later. It is a prerequisite for using AI responsibly in environments where every decision must stand up to scrutiny.

Why Choose Interfacing?

With over two decades of AI, Quality, Process, and Compliance software expertise, Interfacing continues to be a leader in the industry. To date, it has served more than 500 world-class enterprises and management consulting firms across all industries and sectors. We continue to provide digital, cloud, and AI solutions that enable organizations to enhance, control, and streamline their processes while easing the burden of regulatory compliance and quality management programs.

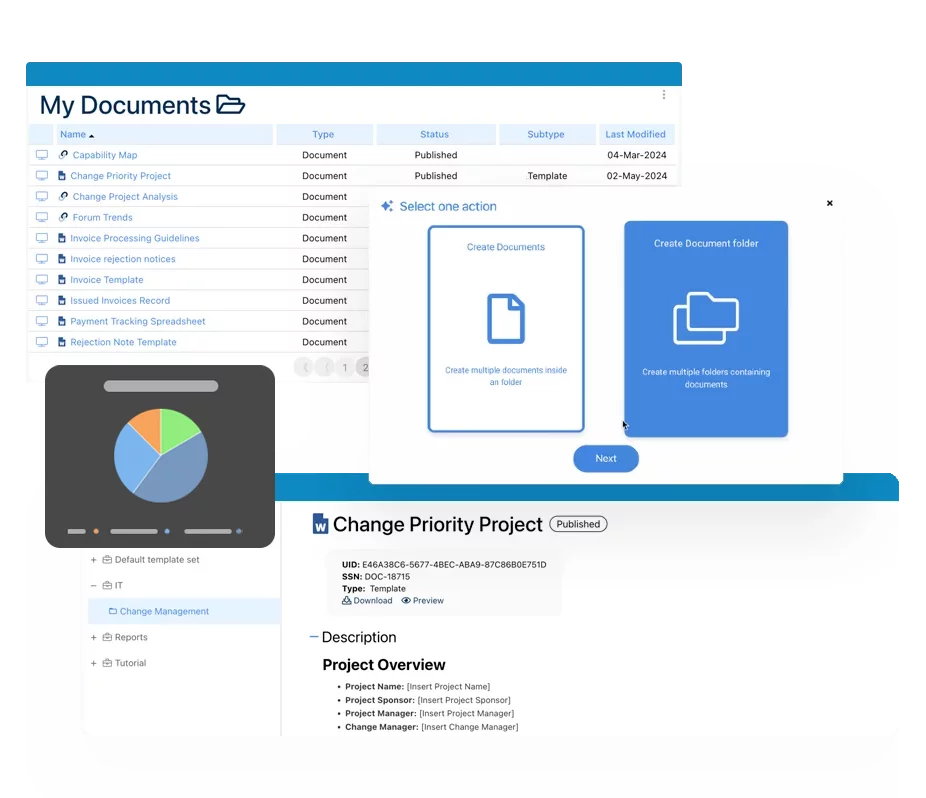

Documentation: Driving Transformation, Governance and Control

• Gain real-time, comprehensive insights into your operations.

• Improve governance, efficiency, and compliance.

• Ensure seamless alignment with regulatory standards.

eQMS: Automating Quality & Compliance Workflows & Reporting

• Simplify quality management with automated workflows and monitoring.

• Streamline CAPA, supplier audits, training and related workflows.

• Turn documentation into actionable insights for Quality 4.0.

Low-Code Rapid Application Development: Accelerating Digital Transformation

• Build custom, scalable applications swiftly.

• Reduce development time and cost.

• Adapt faster and stay agile in the face of evolving customer and business needs.

AI to Transform your Business!

The AI-powered tools are designed to streamline operations, enhance compliance, and drive sustainable growth. Check out how AI can:

• Respond to employee inquiries

• Transform videos into processes

• Assess regulatory impact & process improvements

• Generate forms, processes, risks, regulations, KPIs & more

• Parse regulatory standards into requirements

Document, analyze, improve, digitize, and monitor your business processes, risks, regulatory requirements, and performance indicators within Interfacing’s Digital Twin integrated management system, the Enterprise Process Center®!

Trusted by Customers Worldwide!

More than 400 world-class enterprises and management consulting firms